You can now talk to my front garden

A small experiment with air quality data and AI agents, and why it points at something bigger.

A few years ago, I collaborated on a project called Natural Networks. The idea was to connect environmental data (in this case, water quality at points on the canal network) with machine learning, to prompt us to reconsider our relationship to natural spaces.

The ML part, at the time, was a recurrent neural network trained on literature, generating poetry from sensor data. The outputs were strange and abstract: grammatically clunky, occasionally beautiful, not quite making sense.

The underlying question has stayed with me: what does it mean to connect environmental data to systems that can interpret and act upon it?

The tools available then made the answer more philosophical than practical. Fast forward a few years, and the practical potential has only grown.

Making environmental data useful

At Agreena, I spent a couple of years working on products built around agricultural and environmental data, linking soil carbon, crop productivity, field practices and finance to enable more resilient and sustainable farming. One of the recurring tensions in that work was the gap between data that existed and data that was actually useful to the people making decisions on the ground.

As Dieter Helm puts it: "Putting in the hands of farmers the tools to see in real time what is happening to their soil, their crops, their water supplies, the run-off, and the water contaminants opens up great scope to adapt to the new farming future."

While the domain changes (agriculture, water, air quality, urban infrastructure), the core challenge remains. Data without application is just evidence. The interesting work is putting that data into context, in the right form, connected to something that can change.

The front garden project is a small experiment to close that gap in a specific, concrete way.

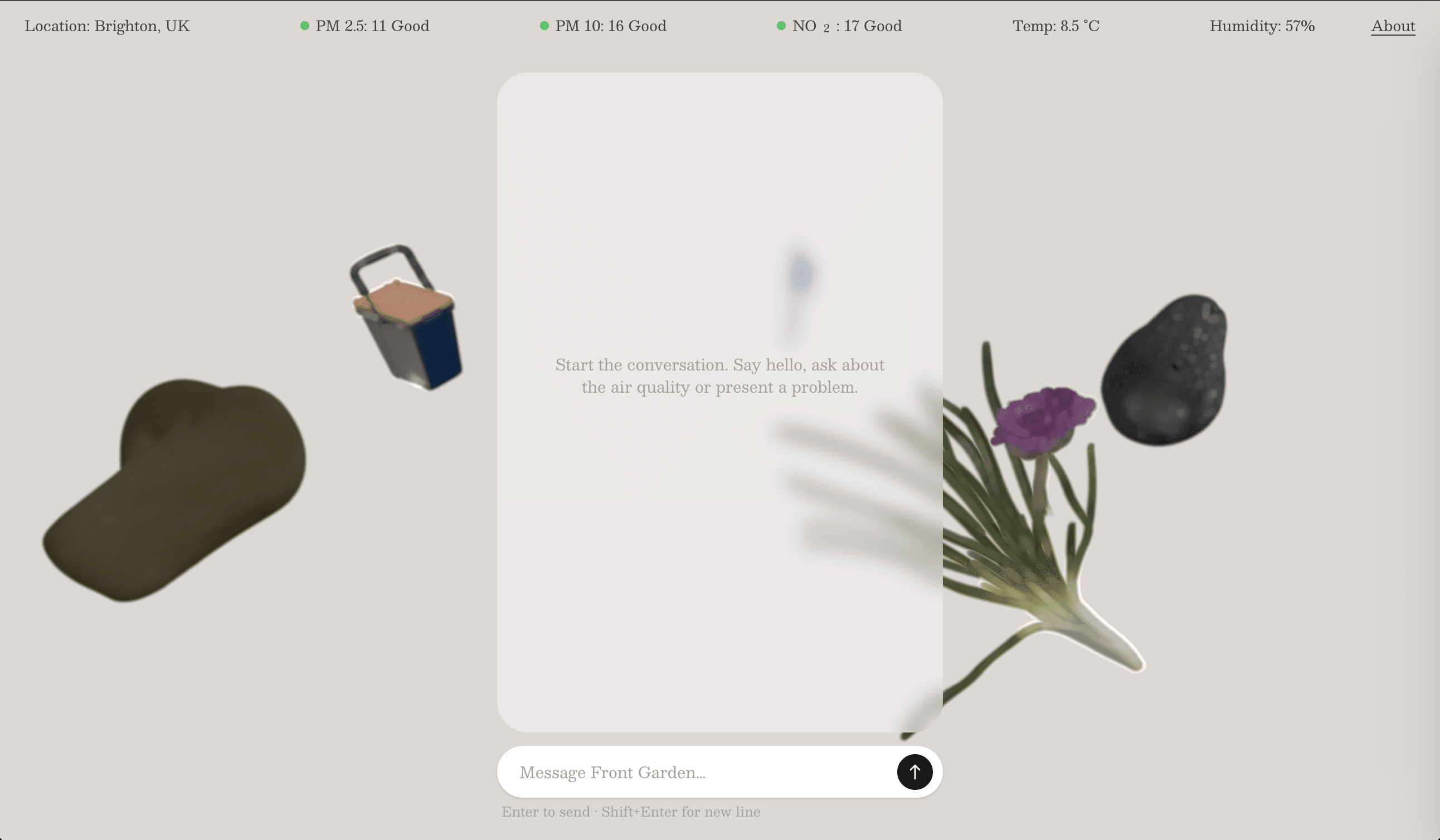

There's an air quality monitor outside my house feeding a conversational agent in near real time. You can ask it what the air is like right now. You can ask it why particulates tend to spike on still days. You can ask it how today compares to the same time last year. The sensor data isn't sitting in a dashboard waiting to be interpreted; it's connected to something that can reason about it, explain it, and respond to questions.
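As a rough illustration of the step between sensor and agent, here is a minimal Python sketch of how raw readings might be condensed into a short text summary that a conversational agent could be given as context. It is not the code running behind the garden. The `Reading` fields, the choice of PM2.5 as the measured quantity, the one-hour window, and the 365-day approximation of "the same time last year" are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Reading:
    """One sensor sample. Field names are illustrative, not the real schema."""
    taken_at: datetime
    pm25: float  # assumed to be PM2.5 in µg/m³


def mean_in_window(readings, start, end):
    """Average PM2.5 over [start, end), or None if no samples fall in it."""
    values = [r.pm25 for r in readings if start <= r.taken_at < end]
    return mean(values) if values else None


def build_context(readings, now):
    """Summarise recent readings as plain text an agent could be given."""
    hour = timedelta(hours=1)
    last_hour = mean_in_window(readings, now - hour, now)
    # "Same time last year" is approximated as the matching hour 365 days ago.
    year_ago = now - timedelta(days=365)
    last_year = mean_in_window(readings, year_ago - hour, year_ago)

    lines = [f"Time of summary: {now:%Y-%m-%d %H:%M}"]
    if last_hour is None:
        lines.append("No readings in the past hour.")
    else:
        lines.append(f"Mean PM2.5 over the past hour: {last_hour:.1f} µg/m³")
    if last_hour is not None and last_year is not None:
        diff = last_hour - last_year
        lines.append(
            f"Same hour a year ago: {last_year:.1f} µg/m³ ({diff:+.1f} change)"
        )
    return "\n".join(lines)


# Made-up readings, purely to show the shape of the output.
now = datetime(2025, 3, 1, 9, 0)
readings = [
    Reading(now - timedelta(minutes=30), 12.0),
    Reading(now - timedelta(minutes=10), 8.0),
    Reading(now - timedelta(days=365, minutes=20), 6.0),
]
print(build_context(readings, now))
```

The point of the summary step is that the agent gets a few grounded facts rather than a raw time series, so questions like "how does today compare to last year?" can be answered from numbers the sensor actually produced.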

A single sensor in a Brighton front garden is just an experiment. But the same architecture applies at neighbourhood scale, and I'm already running a version of that a few streets away.

From garden scale to city scale

The Elm Grove Air Quality Project monitors NO₂ levels near a primary school, where traffic during drop-off and pick-up pushes concentrations well above WHO guidelines. The goal is to make that data genuinely useful to the people it affects rather than leaving it in a dashboard that nobody checks. The same question as before: not whether the data exists, but whether it can be connected to something that helps people understand and act on it.
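To make "above WHO guidelines" concrete, here is a small Python sketch of one way a day of NO₂ samples could be compared against a guideline value, with the school-run periods pulled out separately. It is an illustration, not the Elm Grove project's method. The 25 µg/m³ figure is the 24-hour NO₂ guideline as I understand the WHO 2021 guidelines, and is worth checking against the current WHO tables. The school-run windows and the sample data are invented.

```python
from datetime import datetime, time
from statistics import mean

# WHO 2021 guideline for NO2 as a 24-hour mean, in µg/m³ (verify against the
# current WHO tables before relying on this figure).
WHO_NO2_24H = 25.0

# Hypothetical school-run windows; real drop-off and pick-up times will differ.
SCHOOL_RUN = [(time(8, 0), time(9, 0)), (time(15, 0), time(16, 0))]


def in_school_run(moment):
    """True if a datetime falls inside one of the assumed school-run windows."""
    return any(start <= moment.time() < end for start, end in SCHOOL_RUN)


def summarise_day(samples):
    """Summarise one day of (datetime, NO2 in µg/m³) pairs.

    Expects at least one sample; statistics.mean raises on an empty list.
    """
    daily = mean(value for _, value in samples)
    run_values = [value for moment, value in samples if in_school_run(moment)]
    return {
        "daily_mean": daily,
        "school_run_mean": mean(run_values) if run_values else None,
        "exceeds_who_24h": daily > WHO_NO2_24H,
    }


# Invented hourly data: a flat baseline with spikes at 08:00 and 15:00.
samples = [
    (datetime(2025, 3, 3, hour, 0), 80.0 if hour in (8, 15) else 30.0)
    for hour in range(24)
]
result = summarise_day(samples)
print(result)
```

Separating the school-run mean from the daily mean matters because a daily average can hide exactly the short peaks that parents and children are exposed to.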

The broader opportunity feels significant and still largely untapped. Hardware is getting cheaper and more capable, and products like Ambient Works show there's already maker and professional appetite for dense, portable environmental sensing. The missing layer isn't the sensors. It's the intelligence that sits above them: AI that can reason about what the data means, connect it to context, and feed into systems that can actually act on it.

That might mean responsive buildings that adapt to occupancy and air quality in real time. Neighbourhood monitoring networks that give communities the same environmental intelligence currently only available to researchers. Urban infrastructure that doesn't just log what's happening but responds to it. The pieces (affordable hardware, capable models, agentic frameworks) are there. The design and integration work to make it useful and accountable, rather than just technically possible, is where the interesting problems are.

A note on process

I’ve been using projects like this to try out new design/build workflows – and it’s been a lot of fun. The sensor enclosure was modelled in Blender using Claude MCP and 3D printed with help from the good people in the workshop at Plus X in Brighton. The 3D models on the website are based on photos of the actual stuff in my garden, made with Krea. The site was made in Claude Code, with some iterating in Paper. The agent itself was built following this tutorial and is running on Claude.

Chat with my garden here: https://garden.jamescuddy.co.uk/